Full-stack engineer

15 years across startups, agencies, and enterprise

Startup builder

6+ SaaS products shipped — Peekaboo, TicketToPR, SubredditSignals, Narrative Nooks, Locala, Mochi

Consulting

Enterprise SEO & local visibility tools (Next.js, real API integrations)

Now: AI agent architecture

Building products with teams of specialized AI agents

The realization

"I've been writing code for 15 years. But last year, I stopped writing code — and started managing agents that write it for me."

Not a newcomer experimenting with AI.

An experienced engineer who changed his entire workflow.

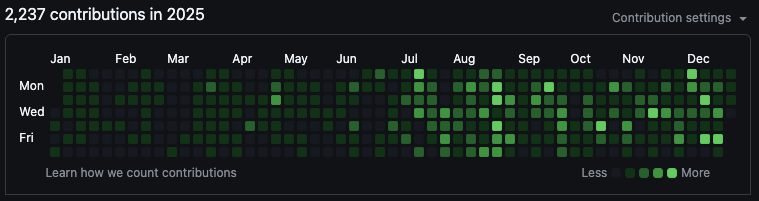

5 products. ~3 months. Solo.

1,162 commits (Peekaboo alone)

13 specialist AI agents

1 person

More issues in unreviewed AI-generated code (The New Stack, 2026)

More security vulnerabilities (The New Stack, 2026)

45% of AI code introduces OWASP Top 10 vulnerabilities (Veracode, 2025)

I review almost everything.

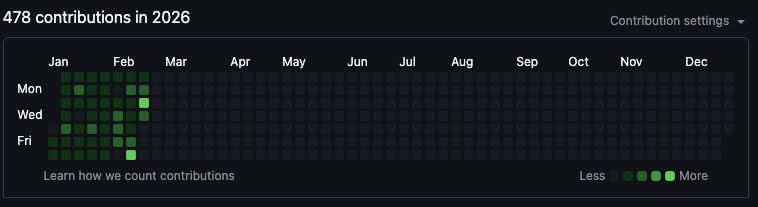

Claude Code: CLAUDE.md

Cursor: .cursorrules

OpenAI Codex: AGENTS.md

Something else? No AI tools yet? All valid.

These principles work across all of these tools. The handbook pattern, the guardrails, the review process — they're universal.

But my preference is Claude Code and the Claude CLI.

Claude Code — Opus 4.6 in the terminal

13 specialist agents — Peekaboo

I give the CLI a feature request. This is what happens — from my actual /.claude/agents/ directory:

Shared brain: Every agent reads CLAUDE.md (38KB) on every run. They don't call each other — they read the same constitution.

Token cost: Opus for complex decisions. Sonnet for implementation. A full spec-to-QA cycle runs $2–8 depending on scope.

| AI Tool | Config File |
|---|---|
| Claude Code | CLAUDE.md |
| OpenAI Codex | AGENTS.md |
| Cursor | .cursorrules |
| Windsurf | .windsurfrules |
| GitHub Copilot | .github/copilot-instructions.md |
| Google Gemini CLI | GEMINI.md |

| Your Company Handbook | AI Handbook |
|---|---|
| "Never share customer data" | "Never expose emails in API responses" |
| "All purchases need approval" | "Never install packages without asking" |
| "Follow the brand guidelines" | "Use the design system colors" |
| "Report incidents immediately" | "Log all errors with context" |

Every serious AI coding tool has converged on this pattern.

Human employees forget the handbook. AI employees read it every single time.

Path 1: Fully Automated

Low-hanging fruit, simple customer requests, co-founder asks

I write a ticket. AI builds it. I review the PR.

Path 2: Hands-On + AI

DB migrations, cron jobs, complex user flows, large architecture tickets

I drive. Claude writes tests, functions, edge cases.

Match the tool to the task. Not everything needs the same pipeline.
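A minimal sketch of that routing decision, assuming tickets carry a category label. The `Ticket` class, the `choose_path` function, and the category strings are hypothetical names made up for illustration, not part of any tool mentioned here. Anything not explicitly known to be safe falls through to the hands-on path.

```python
from dataclasses import dataclass

# Categories the slide assigns to Path 1 (fully automated).
# The label strings are hypothetical; use whatever your tracker provides.
AUTOMATED_KINDS = {"low_hanging_fruit", "simple_customer_request", "cofounder_ask"}


@dataclass
class Ticket:
    title: str
    kind: str  # e.g. "simple_customer_request" or "db_migration"


def choose_path(ticket: Ticket) -> str:
    """Return "automated" only for known-safe work; default to "hands_on"."""
    return "automated" if ticket.kind in AUTOMATED_KINDS else "hands_on"
```

Defaulting unknown categories to the hands-on path is a deliberate choice in this sketch: a mislabeled database migration then gets human attention rather than an unattended run.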

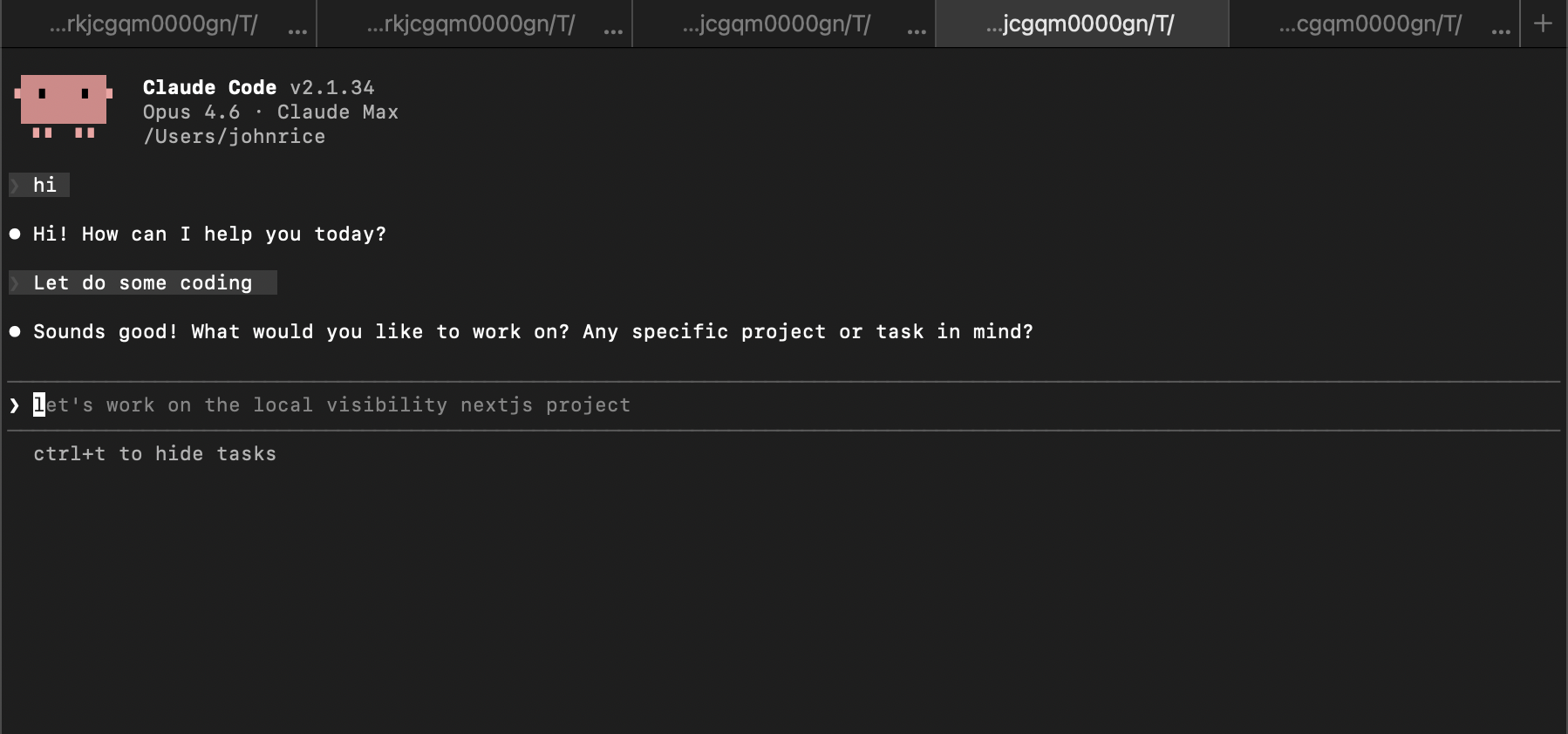

Plain English tickets in Notion. AI handles everything in between.

Behind the scenes (my terminal):

My total time: ~7 minutes

Write the ticket + review the PR. That's it.

What needs hands-on:

How I work with Claude:

Claude writes:

I decide:

I'm not asking Claude to build the feature. I'm asking Claude to help me build it faster.

| | AI Agent Team | Junior Developer | Agency |
|---|---|---|---|
| Monthly cost | $100–300 | $5,000–8,000 | $10,000–25,000 |
| Available | 24/7 | Business hours | Business hours |
| Ramp-up time | Instant | 2–4 weeks | 1–2 weeks |
| Follows rules | Every time | Sometimes | Varies |

As a developer, you already apply KISS every day:

Over-engineered:

KISS:

One function, one job. One module, one concern. The same rule scales to agents.
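As a hypothetical illustration of "one function, one job" (not the example from the original slide), the sketch below contrasts a flag-driven function with three single-purpose ones. All function names are invented for this example, and the email check is deliberately naive.

```python
# Over-engineered: one function normalizes, validates, and formats,
# and boolean flags change what it does on each call.
def process_email(raw: str, validate: bool = True, normalize: bool = True,
                  as_link: bool = False) -> str:
    value = raw.strip().lower() if normalize else raw
    if validate and "@" not in value:
        raise ValueError(f"not an email address: {raw!r}")
    return f"mailto:{value}" if as_link else value


# KISS: each function does one thing and can be tested on its own.
def normalize_email(raw: str) -> str:
    return raw.strip().lower()


def is_email(value: str) -> bool:
    return "@" in value  # naive check, for illustration only


def mailto_link(email: str) -> str:
    return f"mailto:{email}"
```

The small functions compose to the same result, for example `mailto_link(normalize_email(raw))`, but each one can be read, tested, and handed to an agent in isolation.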

The exact same principle applied to your AI team:

Vague mega-prompt:

Focused agent spec:

Simple doesn't mean short. Simple means unambiguous.

Unreviewed AI code — the hard data:

| Finding | Detail | Source |
|---|---|---|
| 45% fail OWASP Top 10 | AI code tested across 100+ LLMs | Veracode, July 2025 |
| 3% vs 21% secure | Devs with AI wrote secure code 3% of the time; without AI: 21% | Stanford / Dan Boneh, ACM CCS |
| +41% complexity | Persistent increase in code complexity after AI adoption | Carnegie Mellon, Nov 2025 |
| 19% slower | Experienced devs were slower with AI (predicted 24% faster) | METR Randomized Trial, 2025 |
| Only 10% scan AI code | 80% of devs bypass security policies for AI output | Snyk, 2025 |

The test is the contract between you and the agent.

Don't assume; verify. Have the system spin up a new agent to review what the first agent built.

Think of it like a home inspection — you don't accept the work until every item checks out.

✓ Data looks right

Does the API return what we expect? Are the fields correct?

✓ Tests prove it works

Automated checks that say "yes, this does what it's supposed to"

✓ It runs in production

Not just on my laptop — it works when real users hit it

✓ You can explain WHY

If you can't explain the approach, there's probably a hidden bug
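The four checks above can be sketched as an explicit gate. This is a minimal illustration, assuming each check has already been reduced to a yes/no answer; the `Inspection` class and the helper names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class Inspection:
    data_looks_right: bool
    tests_pass: bool
    runs_in_production: bool
    can_explain_why: bool


def failed_checks(inspection: Inspection) -> list[str]:
    """Names of the checklist items that did not pass, in checklist order."""
    return [f.name for f in fields(inspection) if not getattr(inspection, f.name)]


def is_done(inspection: Inspection) -> bool:
    """Work counts as done only when every item passes."""
    return not failed_checks(inspection)
```

Returning the names of the failed items, rather than a bare `False`, gives the next agent run something concrete to act on.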

"Done" doesn't mean "it seems to work." It means it passed the inspection.

Don't guess. Measure. Then tell the agent what you measured.

What most people do:

What I do instead:

Agents make great decisions when you give them great data — not vague instructions.
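One way to turn "measure, then tell the agent" into a habit is to time the slow path and put the numbers directly into the request. The sketch below is illustrative only: `measure_ms` and `build_prompt` are hypothetical helpers, and the wording of the prompt is an assumption.

```python
import statistics
import time


def measure_ms(fn, runs: int = 5) -> float:
    """Median wall-clock time of fn() in milliseconds over several runs."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


def build_prompt(target: str, measured_ms: float, budget_ms: float) -> str:
    """Compose a request that states the measurement instead of 'it feels slow'."""
    return (
        f"{target} takes {measured_ms:.0f} ms (median of repeated runs); "
        f"the budget is {budget_ms:.0f} ms. "
        "Find the slowest step and propose one change."
    )
```

For example, `build_prompt("GET /api/report", measure_ms(call_report), 300)` would produce a request with the measured figure and the target in one sentence, where `call_report` stands in for whatever function exercises the slow path.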

Instructions can be forgotten. Hooks can't.

Why this rule exists:

Claude ran prisma db push --accept-data-loss on my production Peekaboo database.

Entire database — deleted.

The lesson:

Backups saved most of it. But some customer data was permanently lost. Every rule in this file was written in blood.
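A guardrail of this kind can be sketched as a small command filter that runs before any shell command the agent proposes. This is a sketch under assumptions, not the hook from the talk: it assumes the hook receives a JSON payload whose `tool_input.command` field holds the command, and that exit code 2 means "block". Both should be checked against your tool's hook documentation. The pattern list and function names are illustrative.

```python
import re

# Commands that must never run unattended (illustrative, not exhaustive).
BLOCKED_PATTERNS = [
    r"--accept-data-loss",
    r"\bprisma\s+migrate\s+reset\b",
    r"\bdrop\s+(database|table)\b",
]


def blocked_reason(command: str):
    """Return a reason string if the command is destructive, else None."""
    for pattern in BLOCKED_PATTERNS:
        if re.search(pattern, command, flags=re.IGNORECASE):
            return f"blocked by guardrail: matches {pattern!r}"
    return None


def exit_code_for(payload: dict) -> int:
    """Map a hook payload to an exit code: 2 to block, 0 to allow (assumed convention)."""
    command = payload.get("tool_input", {}).get("command", "")
    return 2 if blocked_reason(command) else 0
```

A thin wrapper script would parse the payload from standard input, print the reason to standard error, and exit with the returned code. Because the check runs outside the model, it applies even when the written instruction is ignored.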

| Vibe Coding | Agent Engineering |
|---|---|
| No constitution | 38KB CLAUDE.md |
| No tests | Testing-first, agent-reviews-agent |
| No review | Human reviews every PR |
| No guardrails | Hooks + locked files + build gates |
| "It works on my machine" | Definition of Done enforced |

The engineering principles don't go away because the author is artificial.

Peekaboo: Brand visibility analytics across 5 AI platforms. 1,162 commits, 13 agents.

TicketToPR: Notion-to-PR automation. AI scores feasibility, builds features, creates PRs. On npm.

SubredditSignals: Reddit lead intelligence. AI-scored leads, sentiment analysis, estimated deal value.

Narrative Nooks: AI-powered personalized children's stories. Custom illustrations and interactive reading.

Locala: Local business discovery platform. Location-based recommendations and search.

Mochi: Reddit content strategy & scheduling. AI-powered subreddit analysis and auto-posting.

All built with the same approach: CLAUDE.md + specialist agents + human review.

10M+ sources analyzed

3M+ AI responses

5 AI models

24/7 automated

What Peekaboo tracks

How brands appear in ChatGPT, Gemini, Perplexity, Google AI Mode, and Google AI Overview. Competitive intelligence, visibility scoring, traffic estimates.

Traditional equivalent

3–5 developers, 4–6 months, $200K+ in salary. Built by one person + AI agents at a fraction of the cost.

Before

Write code all day

Debug all night

No time for product

After

Architecture & design

Product strategy

Code review & customer calls

Agents handle the implementation. You handle the thinking.

Unlike human team members, agents actually read the post-mortem document every single time.

Your job is knowing what to build.

Start with a CLAUDE.md. Add one rule. See what happens.

"Cursor and Claude Code are great at helping build software once it's clear what needs to be built. But the most important part is figuring out what to build in the first place."

— Y Combinator, Spring 2026

📖 AI Agent Playbook

Free templates, guides & examples

Peekaboo

aipeekaboo.com

TicketToPR

tickettopr.com

Thank you! Questions?